EDTECH

B2B PRODUCT

Redesigning the CMS Behind India's Largest Test Prep Platform

Aakash generates 50M+ content assets annually, yet its core creation tool was not built with user experience in mind. This led to increased turnaround time (TAT) and compromised content quality, impacting 7M students.

I conducted a usability heuristic audit, identified 40+ critical issues, and redesigned the core creation workflows to improve efficiency, consistency, and output quality.

PLATFORM

Dashboard design

DURATION

24 Weeks

MY ROLE

UX Researcher, Product Designer

WHAT WE ACHIEVED

Optimisation of content workflows in the dashboard

100+

Critical usability blockers resolved by handoff

40%

Reduction in TAT for creating questions and tests

THE PROBLEM

The tool that powers our tests was the biggest obstacle to making them.

Aakash's internal CMS was the backbone of test content creation, yet it was built for engineers, not faculty. Teams battled a fragmented, error-prone interface every day, creating invisible quality debt at scale.

No Goal or Wayfinding

Entering 120+ fields for a single paper felt endless. There was no step indicator, no progress feedback, no sense of "almost done", causing abandonment mid-flow and incomplete drafts.

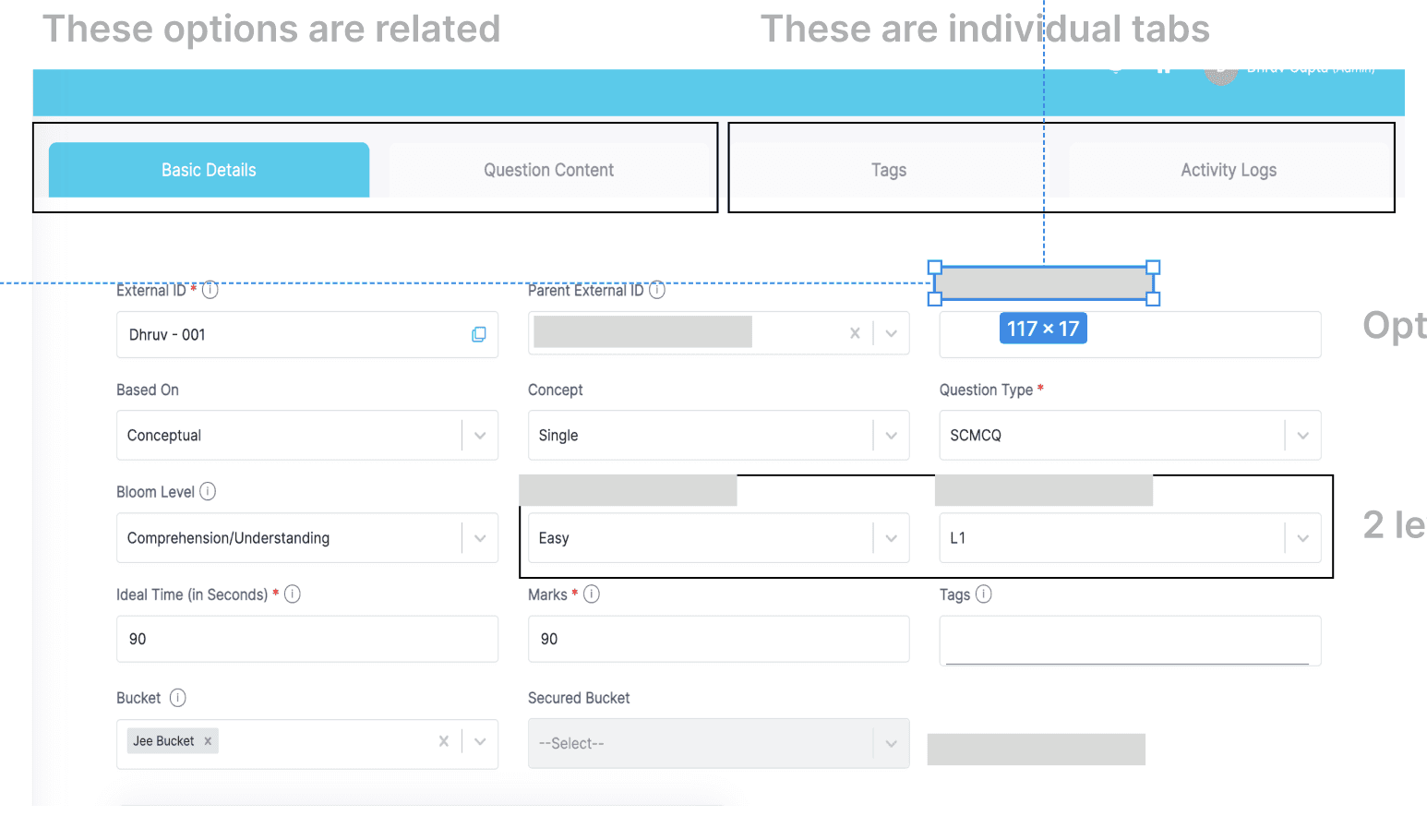

Fragmented Information Architecture

Mandatory and non-mandatory fields, associated topics, and test structure were spread across unlinked tabs. Authors context-switched constantly, with no sense of overall progress or completion.

No Error Prevention

Required fields failed silently. Authors discovered missing data only after submission, causing re-entry loops and lost work. Error messages were either absent or appeared at the wrong moment.

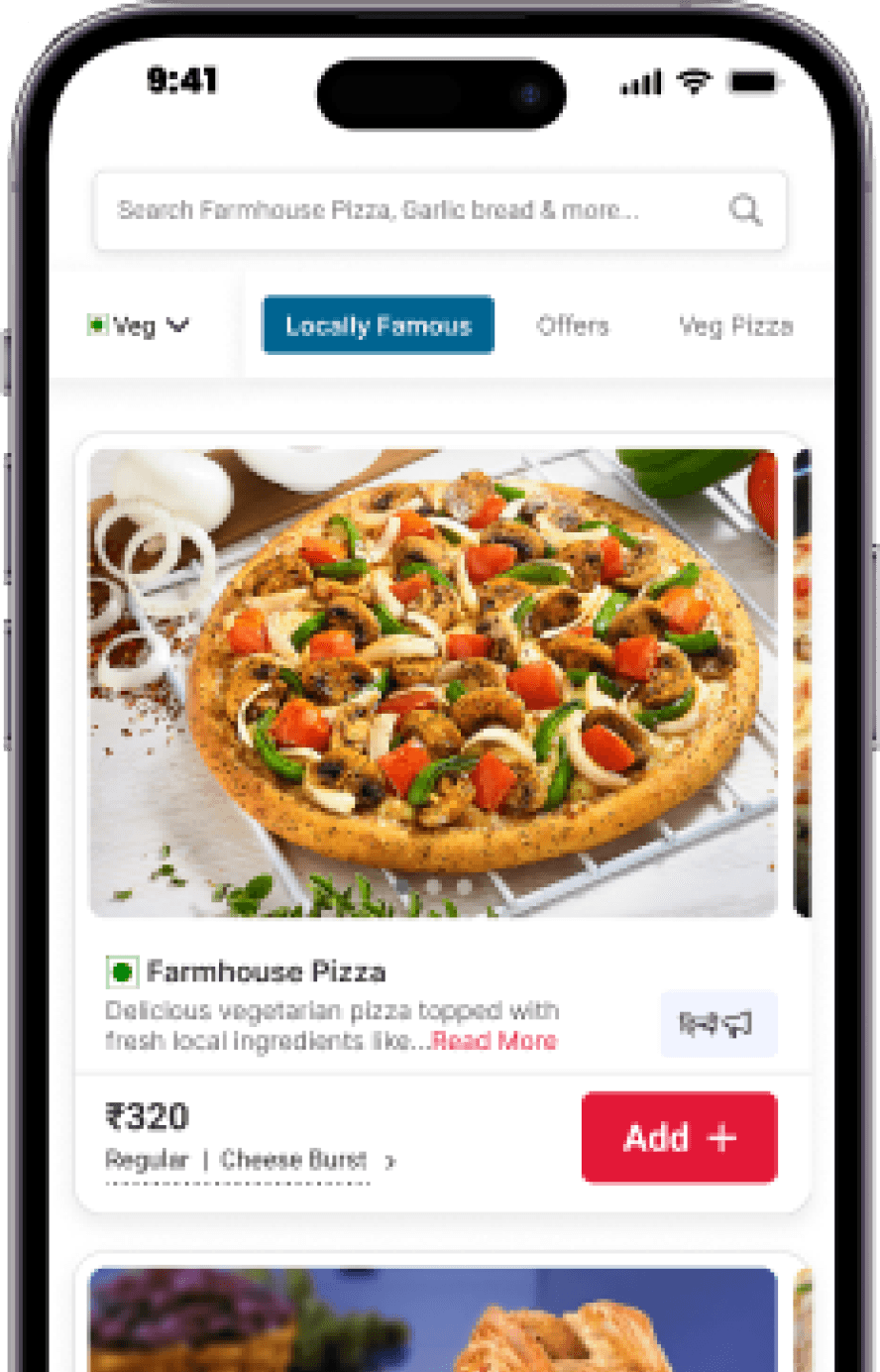

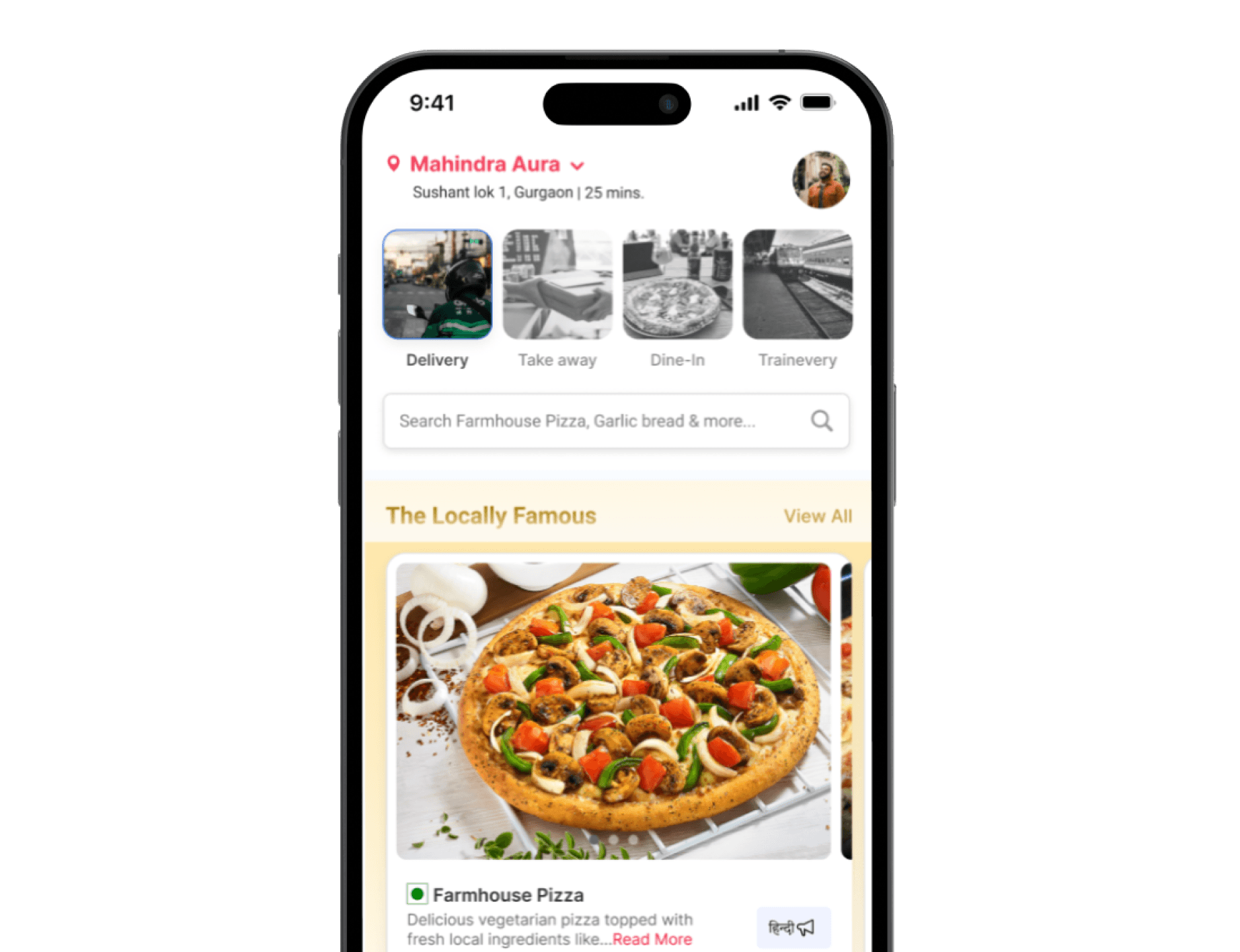

UPDATED DESIGN


CONTEXT

A broken flow in the tool ripples to students

Most UX problems affect users directly. This one was different: the users were faculty and department heads, but the people who really paid the price were students. Bad tooling leads to bad content, resulting in low-quality questions and delayed exams. Understanding this cascade shaped every design decision.

7M+

Students downstream

Every piece of test content served to Aakash students is managed through this CMS. A single broken flow ripples to thousands. Quality degradation at the tool level creates compounding errors at scale.

7 Days, 1 Test

Test Generation

Faculty spent 6-8 hours a day in the CMS, yet only one test was generated per week. Tool friction was not just slowing them down; it was also leading to irrelevant mappings and abandoned drafts that blocked downstream publication.

0

UX Iterations Since Launch

An 8-year-old system that had never been evaluated through a user lens. Engineers built to spec, not to the mental models of the people who would use it 5 days a week. The result was accumulated UX debt with zero visibility.

40%

Time lost to friction

Research revealed that around 40% of session time went into navigating confusing screens, re-entering data, recovering from form errors, and reopening tabs to find already-entered values.

THE CONTENT PIPELINE, AND WHERE IT BREAKS

Syllabus Planning

The HOD defines the test by batch, subject, and chapter scope.

CMS Entry

Faculty create questions, then tests, juggling multiple screens and countless fields. This is where the pipeline breaks.

Test Review

Reviewers have to be notified separately about pending reviews, and the review itself is done on printed paper.

Error Correction

Error correction is slow and tedious. Reviewers mark changes on printed papers, and editors then make those changes in the CMS.

The Cascading Failure

Validation gaps → incomplete papers published → wrong question-syllabus mapping → students tested on content outside their batch scope. An invisible quality leak with no alert system.

DESIGN PROCESS

Audit first. Design second.

The single best decision: we didn't open Figma for the first week. A structured heuristic evaluation gave every subsequent design decision an evidence base, not just taste.

01

Heuristic Audit

40+ Violations

Severity Mapping

Each violation annotated with screen + heuristic

Severity-scored to build a prioritised fix queue

02

Contextual Research

20+ Interviews

Shadowed 2 authors during live paper entry

Mapped flows to find abandonment points

03

IA + State Mapping

Card Sorting

IA Design

Card sorting with stakeholders on field grouping.

Individual IA for complex flows

04

Design and Test

Agile

UAT

Designed a master structure, then individual flows.

Field testing with 45+ faculty members at 3+ institutes

VIOLATION MAPPING

40+ violations. Mapped, scored, and ranked by impact.

Applied across the current tool, 6 of 10 heuristics had critical-severity violations, with multiple UX laws also being broken.

System Status

Error Prevention

Flexibility

Consistency

Aesthetic

Hick's Law

Law of Common Region

40+ Violations

CRITICAL

Hick's Law - Too many buttons

The interface had too many buttons, leading to confusion and a disrupted workflow.
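Hick's Law is commonly stated as T = b · log2(n + 1), where n is the number of equally likely choices and b is an empirically fitted constant. As a rough illustration, using hypothetical button counts rather than counts from the audited screens, going from 3 to 15 options doubles the logarithmic decision-time term:

```latex
\begin{aligned}
T &= b \cdot \log_2(n + 1) \\
n = 3:\quad T &= b \cdot \log_2 4 = 2b \\
n = 15:\quad T &= b \cdot \log_2 16 = 4b
\end{aligned}
```

The equal-likelihood assumption rarely holds exactly in a real toolbar, so this is a directional argument for trimming choices, not a precise prediction.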

CRITICAL

Goal Gradient Effect - No sense of progress or completion

56+ fields with no sense of progress led to fatigue. Authors reported more errors in the final third of entry, exactly where the Goal Gradient Effect predicts.

CRITICAL

Fitts' Law - Action buried at scroll bottom

The primary action was placed at the very bottom of a variable-length form. On longer papers, reaching it meant scrolling past 60+ fields, which made it hard to locate and caused frustration and delays.
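Fitts' Law, in its common Shannon formulation, models the time to reach a target as MT = a + b · log2(D/W + 1), where D is the distance to the target, W is its width, and a and b are empirically fitted constants. The distance/width ratios below are hypothetical. They show how the difficulty term grows as the target drifts further away:

```latex
\begin{aligned}
MT &= a + b \cdot \log_2\!\left(\frac{D}{W} + 1\right) \\
\tfrac{D}{W} = 3:\quad MT &= a + 2b \\
\tfrac{D}{W} = 31:\quad MT &= a + 5b
\end{aligned}
```

Strictly, the law models pointing rather than scrolling. A button whose position depends on form length adds search and scroll cost on top of this, which is why a fixed, persistent placement helps.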

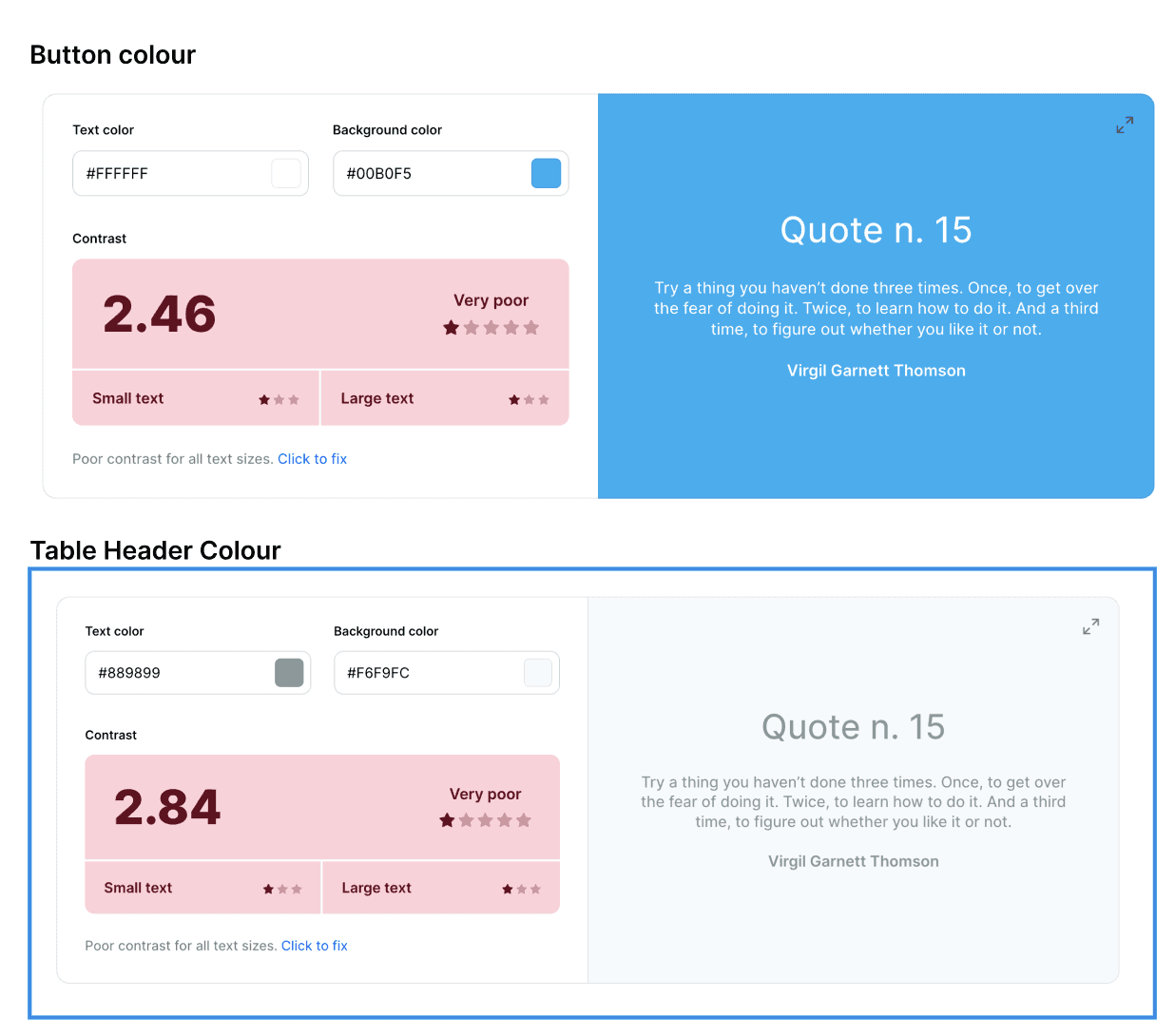

MEDIUM

Product colour - Contrast ratio failure

Table rows and buttons on the master screen failed contrast requirements for low-vision users and caused eye strain, and in some cases even headaches.
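WCAG 2.x defines contrast ratio as (L1 + 0.05) / (L2 + 0.05), where L1 and L2 are the relative luminances of the lighter and darker colours. Level AA requires at least 4.5:1 for normal text and 3:1 for large text and UI components. Below is a minimal Python sketch of that check. The function names are hypothetical, and the sample colours are illustrative greys, not Aakash's actual palette.

```python
# Minimal sketch of the WCAG 2.x contrast-ratio check.
# Function names are hypothetical; colours are "#rrggbb" hex strings.


def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a '#rrggbb' colour (0.0 = black, 1.0 = white)."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(fg: str, bg: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05); ranges from 1.0 to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(fg), relative_luminance(bg)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(fg: str, bg: str, large_or_ui: bool = False) -> bool:
    """AA threshold: 4.5:1 for normal text, 3:1 for large text and UI components."""
    return contrast_ratio(fg, bg) >= (3.0 if large_or_ui else 4.5)


if __name__ == "__main__":
    # Compare two near-identical greys on a white background.
    for fg in ("#767676", "#777777"):
        print(fg, round(contrast_ratio(fg, "#ffffff"), 2), passes_aa(fg, "#ffffff"))
```

Run against white, "#767676" passes AA for normal text at roughly 4.54:1, while the barely lighter "#777777" falls just short at roughly 4.48:1. Failures on a table like this can hinge on very small colour shifts.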